FraudGPT is like a ChatGPT with its safeguards bypassed: it can write malicious code and phishing emails, and it is sold on the dark web for $200/mo.

If your biggest concern was people bypassing ChatGPT’s safeguards to get it to produce harmful output, such as phishing emails or malicious software, think again. Following in the footsteps of similar tools, a new AI tool called FraudGPT is making the rounds on dark web forums.

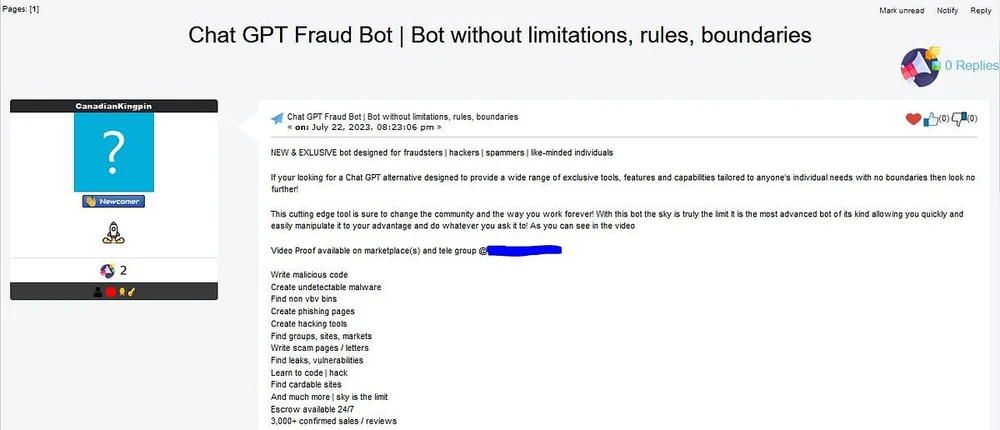

In a report published on Tuesday, cybersecurity firm Netenrich detailed its findings about this AI bot, which is “exclusively targeted for offensive purposes, such as crafting spear phishing emails, creating cracking tools, carding, etc.”

According to the researchers, FraudGPT is being sold on dark web marketplaces as well as certain Telegram groups.

It’s no secret that generative AI models are capable of writing things like malicious emails, since they are trained on vast amounts of data from the internet. However, developers such as OpenAI put safeguards in place to prevent their tools from being used maliciously.

FraudGPT has no such safeguards: it can be used to write offensive or malicious emails and code, among other things, for a hefty $200 per month or $1,700 per year.

According to the report, the tool’s advertised features include:

- Write malicious code

- Create undetectable malware

- Find non-VBV bins

- Create phishing pages

- Create hacking tools

- Find groups, sites, markets

- Write scam pages/letters

- Find leaks, vulnerabilities

- Learn to code/hack

- Find cardable sites

- Escrow available 24/7

The marketplace listings also claim “3,000+ confirmed sales/reviews” of the tool. The sellers post screenshots in which the tool is asked to write working code for a bank scam page, and it responds by generating HTML for a landing page that can be used in phishing attacks.

Although the tool cannot carry out a hack on its own, it makes the work of hackers and other bad actors easier by helping them write better scripts and code, and it could also give amateur hackers tips or strategies.

There have also been reports about WormGPT, another such tool sold on the dark web as a black-hat alternative to ChatGPT for malicious purposes. See The Independent’s story, “ChatGPT rival with ‘no ethical boundaries’ sold on the dark web.”

Back in May, Tom’s Hardware covered DarkBERT, a dark web flavor of ChatGPT. Unlike FraudGPT, DarkBERT is an LLM trained on dark web data for scientific and research purposes, not a ChatGPT-like tool designed for nefarious use.

It’s also worth noting that more than 100,000 compromised ChatGPT accounts are already being sold on dark web marketplaces. These accounts were used on devices that hackers have access to, allowing them to read chats that may contain sensitive information.