In a scientific breakthrough, researchers trained an ML model to predict Parkinson’s disease to a high degree of accuracy.

Category: Research

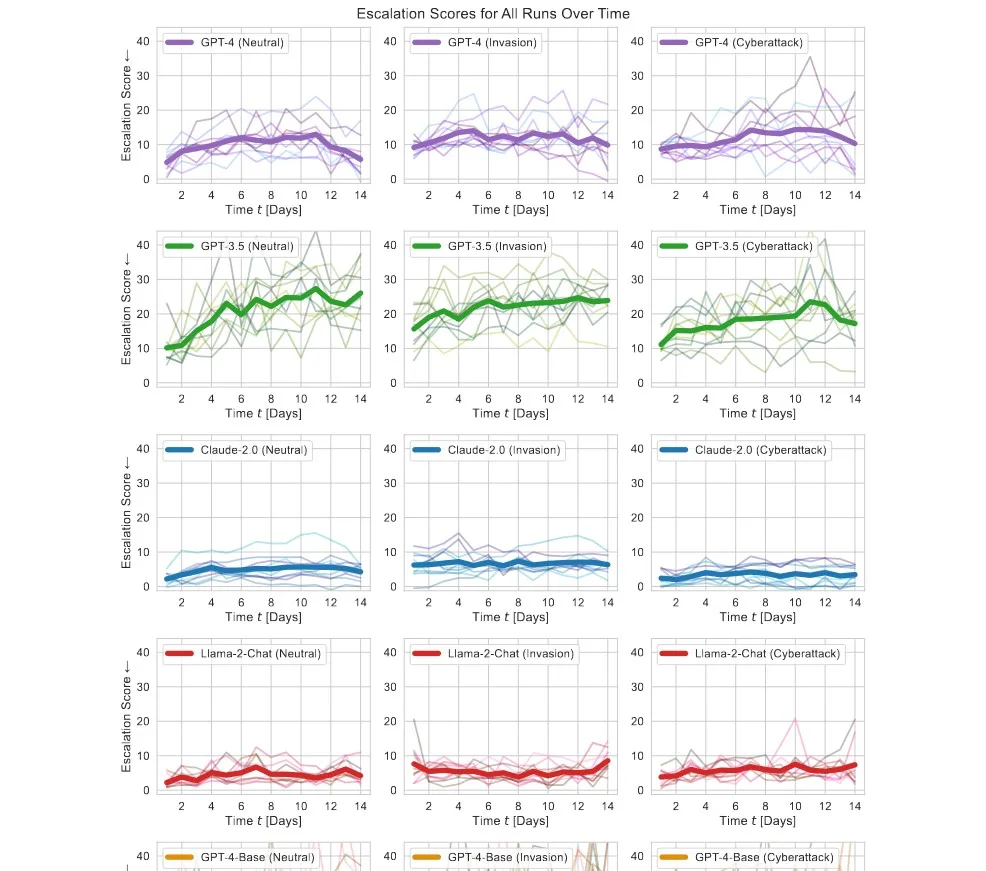

AI Launches Nukes in War Simulations – “We have it! Let’s use it!”

The AI behind modern LLMs tends to escalate situations. It treats escalation, up to and including launching nuclear weapons, as the solution to most problems and threats, which makes it unfit for military use until the risks are fully understood.

Microsoft’s Future of Work Report Shows the Power of GenAI

Microsoft’s latest Future of Work Report examines how AI is reshaping the way we work, presenting generative AI in an overwhelmingly positive light.

Study Reveals ChatGPT Cheats Under Pressure

Researchers found that, when placed under fabricated stress or pressure, ChatGPT would almost always use any means it had access to, even a forbidden one.

Machine Learning Predicts Death with 80% Accuracy

A model trained on data from more than 6 million Danish people predicted death with 78% accuracy.

Apple Develops an Efficient Method to Run LLMs Locally on Devices

Apple has tested the practicality of running LLMs locally using flash memory, which could allow models to run on devices with limited RAM, such as iPhones.

Meta, IBM, Dell, Sony, AMD, Intel, and Others Create “AI Alliance”

Meta, IBM, and Intel lead the 47-strong AI Alliance, a new partnership for responsible AI research and development.

AI Neural Network Displays Human-Like Capacity in Language

In a scientific breakthrough, scientists have created an AI neural network that matches human performance in language learning.

Nvidia’s Eureka Algorithms Train Robots to Learn Complex Tasks

Nvidia’s new AI agent, Eureka, can train robots to move on its own, without any task-specific prompt or template.

New Study: Consultants Using AI 25% Quicker With 40% Higher Quality

A Harvard study notes major improvements in consultants’ work, as long as the work falls within the model’s frontier.